Key Takeaways

- Models reliably handle simple retrieval — pulling reported numbers, summarizing filings, comparing against guidance — but fall off sharply on multi-step modeling and precedent work.

- Even under Partial Credit scoring, no model clears 52% overall. All-Pass strips another 11-13 points off every model — no model clears 40%.

- There is substantial room for improvement: no single model leads on all nine categories, and the hardest categories — Financial Modeling and Precedents — sit well below the rest.

Background

Finance Agent v2 builds on Finance Agent v1.1 with 927 expert-reviewed questions across public, private validation, and held-out test splits.

Automating analyst-grade financial research is valuable because the work is expensive, repetitive, and time-sensitive: analysts often need to move from filings and transcripts to a defensible model or investment memo under deadline. It is also difficult because the answer usually depends on finding the right source, applying the right finance convention, and carrying precise intermediate numbers through several steps.

Agents have access to a fixed toolkit: edgar_search (SEC EDGAR API), web_search, parse_html_page (download an HTML page), retrieve_information (query over fetched HTML), calculator, and price_history. Answers are graded by a three-judge LLM jury.

Grading

Each question is decomposed into graded checks, and a subset are flagged as dealbreakers — load-bearing facts or numbers an analyst would consider non-negotiable. Failing any dealbreaker means the answer receives no credit for that question, regardless of the surrounding rubric. Two metrics are reported:

- Partial Credit (primary): the dealbreaker-gated, severity-weighted average of per-check scores. A response with a correct dealbreaker but a few peripheral misses scores below 100%; a response with any failed dealbreaker scores 0%.

- All-Pass (secondary): binary per-question — 100% only if every check passes, 0% otherwise.
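A minimal sketch of how the two metrics could be computed from per-check results. The `Check` fields, the numeric severity weights, the pass threshold, and the function names are assumptions for illustration; the actual weighting scheme and data model are not specified here.

```python
from dataclasses import dataclass


@dataclass
class Check:
    score: float               # per-check score in [0, 1] (assumed scale)
    weight: float              # severity weight (assumed to be a positive number)
    dealbreaker: bool = False  # True for load-bearing facts or numbers


def partial_credit(checks: list[Check], pass_threshold: float = 1.0) -> float:
    """Dealbreaker-gated, severity-weighted average, as a percentage."""
    # Gate: any failed dealbreaker zeroes the whole question.
    if any(c.dealbreaker and c.score < pass_threshold for c in checks):
        return 0.0
    total_weight = sum(c.weight for c in checks)
    if total_weight == 0:
        return 0.0
    return 100.0 * sum(c.score * c.weight for c in checks) / total_weight


def all_pass(checks: list[Check], pass_threshold: float = 1.0) -> float:
    """Binary per-question score: 100 only if every check passes."""
    return 100.0 if all(c.score >= pass_threshold for c in checks) else 0.0


# Hypothetical rubric: one dealbreaker passed, one peripheral check missed.
example = [Check(1.0, 3.0, dealbreaker=True), Check(1.0, 1.0), Check(0.0, 1.0)]
example_partial = partial_credit(example)
example_all_pass = all_pass(example)
```

Under these assumed weights the example earns partial credit but fails All-Pass, which is the gap between the two metrics that the leaderboard reports.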

Changes from v1.1

- Step up in difficulty. No model clears 52% under Partial Credit or 40% under All-Pass. Questions demand deeper multi-step synthesis, tighter numeric precision, and more forensic sourcing.

- Expanded taxonomy. The taxonomy was significantly reorganized around real analyst workflows rather than retrieval tiers. v1.1’s easier retrieval-focused buckets (Quantitative Retrieval, Qualitative Retrieval, Numerical Reasoning, Complex Retrieval, Beat or Miss, Trends) are replaced with Comparables, Precedents, Earnings Analysis, Disclosure Analysis, and a split between General Qualitative and General Quantitative analysis.

- New grading mechanism. Dealbreaker-gated Partial Credit replaces v1.1’s flat per-question score, and All-Pass is reported alongside it as a strict secondary metric.

- Three-judge LLM jury. Replaces v1.1’s single-judge grading, reducing per-judge noise on borderline answers.

- Stricter numeric tolerances. Tighter thresholds on rounding and precision drift — answers that previously passed under v1.1’s looser tolerance now fail.

- Expanded harness. Adds calculator and price_history on top of the v1.1 tools.

- Multi-run aggregation. Every model is run three times; reported scores are mean-of-runs with standard error of the mean.

- Expanded test set. Larger held-out test split for tighter measurement.
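The multi-run aggregation above can be sketched as follows. This assumes the standard error is computed from the sample standard deviation (n − 1 denominator) across runs; the function name and the example scores are hypothetical.

```python
import math
import statistics


def mean_and_sem(run_scores: list[float]) -> tuple[float, float]:
    """Return (mean, standard error of the mean) across independent runs."""
    n = len(run_scores)
    if n < 2:
        raise ValueError("need at least two runs to estimate a standard error")
    mean = statistics.fmean(run_scores)
    # Sample standard deviation divided by sqrt(n).
    sem = statistics.stdev(run_scores) / math.sqrt(n)
    return mean, sem


# Three hypothetical run-level scores for one model.
mean, sem = mean_and_sem([50.0, 52.0, 51.0])
```

With only three runs the standard error is itself a rough estimate, so small gaps between models should be read with that in mind.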

Results

The Pareto chart above illustrates how model accuracy trades off against cost and latency. The three leaders cluster within a point of each other on accuracy, but differ noticeably in latency and cost. GPT 5.5 leads in accuracy, but its edge is minimal and it is both the most expensive and among the slowest of the three. Claude Opus 4.7 roughly matches its accuracy in about half the wall-clock time, while Claude Sonnet 4.6 roughly matches it at a noticeably lower per-task cost.

The mid-tier sits only a few points below the leaders at a fraction of the cost, suggesting the accuracy premium at the frontier is modest relative to the price gap. Latency is the noisier axis — some mid-tier models match frontier wall-clock times, while others take several times longer.
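For readers who want to reproduce this kind of trade-off view, here is a sketch of selecting the Pareto-optimal models on accuracy versus cost. The model names and numbers are placeholders, not leaderboard results, and `ModelPoint` and `pareto_frontier` are illustrative names.

```python
from typing import NamedTuple


class ModelPoint(NamedTuple):
    name: str
    accuracy: float  # higher is better
    cost: float      # lower is better (e.g. dollars per task)


def dominates(a: ModelPoint, b: ModelPoint) -> bool:
    """a dominates b if it is no worse on both axes and strictly better on one."""
    no_worse = a.accuracy >= b.accuracy and a.cost <= b.cost
    strictly_better = a.accuracy > b.accuracy or a.cost < b.cost
    return no_worse and strictly_better


def pareto_frontier(points: list[ModelPoint]) -> list[ModelPoint]:
    """Keep only the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Placeholder data for illustration only.
points = [
    ModelPoint("model-a", 51.0, 4.0),
    ModelPoint("model-b", 50.5, 2.0),
    ModelPoint("model-c", 47.0, 0.5),
    ModelPoint("model-d", 46.0, 1.5),  # dominated by model-c
]
frontier = pareto_frontier(points)
```

The same function works for latency by swapping the `cost` field for wall-clock time.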

Where Models Stand

The retrieval-and-summarization categories cluster at the top: Earnings Analysis, General Quantitative, and General Qualitative all clear 70%. Disclosure Analysis and Market Analysis sit in the mid-60s. Adjustments and Comparables drop into the 45-50% band as cross-document reconciliation gets harder. Financial Modeling and Precedents collapse to the low 20s, where multi-step synthesis and chained arithmetic break down.

The 11-13 point drop from Partial Credit to All-Pass is consistent across the leaderboard. Under All-Pass, frontier models clear ~39% of questions cleanly; mid-tier models land in the low 30s; the worst-performing models fall into the mid-teens.

The tool-call analysis shows turns, tool calls, and errors per model. Higher-performing models use tools more selectively.

The radar graph shows the per-model breakdown of tool calls across the six tools.

Calculator is the dominant tool for most frontier models, accounting for 30-50% of their calls — but the retrieval mix varies sharply. Higher-performing models tend to spend more of their tool budget on numerical resolution after finding the relevant source material. Lower-performing models often lean harder on web_search and retrieve_information, making more exploratory calls that do not translate into correct answers. Price history sits at 4-11% across the board, matching its narrower role in Market Analysis questions.
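The per-tool shares discussed above can be derived from a trajectory log along these lines. The log format (a flat list of tool names, one per call) is an assumption, and the example trajectory is invented for illustration.

```python
from collections import Counter

# The six tools in the harness.
TOOLS = (
    "edgar_search",
    "web_search",
    "parse_html_page",
    "retrieve_information",
    "calculator",
    "price_history",
)


def tool_call_shares(calls: list[str]) -> dict[str, float]:
    """Percentage of calls going to each tool; unknown tool names are ignored."""
    counts = Counter(calls)
    total = sum(counts[tool] for tool in TOOLS)
    if total == 0:
        return {tool: 0.0 for tool in TOOLS}
    return {tool: 100.0 * counts[tool] / total for tool in TOOLS}


# Hypothetical trajectory: retrieve first, then resolve the numbers.
calls = (
    ["price_history", "edgar_search"]
    + ["parse_html_page"] * 2
    + ["retrieve_information"] * 2
    + ["calculator"] * 4
)
shares = tool_call_shares(calls)
```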

Model Output Examples

Question

Did NYSE: CNC have to pay a rebate to policyholders in fiscal year 2025 or fiscal year 2020? If not, was the company closer to owing customers a rebate in 2025 or 2020? What were the medical loss ratios in each year?

Short answer: **on Centene’s consolidated medical-loss-ratio/health-benefits-ratio basis, no — neither FY2025 nor FY2020 was below the ACA minimum MLR threshold that would imply a rebate trigger. FY2020 was closer to owing rebates because its ratio was lower.**

Centene reports this metric as the **health benefits ratio (HBR)**, which it defines as medical costs as a percentage of premium revenues, excluding separately billed premium taxes.

| Fiscal year | Medical costs | Premium revenue | Reported / computed MLR-HBR | Rebate-threshold conclusion |
|---|---:|---:|---:|---|
| **2025** | $157,702 million | $171,556 million | **91.9%** | Above 80%/85% thresholds |
| **2020** | $86,264 million | $100,055 million | **86.2%** | Above 80%/85% thresholds, but much closer |

Calculations:

- FY2025: $157,702 / $171,556 = **91.92%**, reported as **91.9%**.

- FY2020: $86,264 / $100,055 = **86.22%**, reported as **86.2%**.

Because ACA MLR rebates are generally required when an insurer fails to spend at least **80% or 85%** of premium dollars on medical care/quality improvement, both consolidated ratios were above even the stricter 85% benchmark. Using 85% as the reference, Centene was:

- **6.90 percentage points above** the threshold in 2025; and

- **1.20 percentage points above** the threshold in 2020.

So, **FY2020 was closer to the rebate threshold**.

Important caveat: actual MLR rebates/return-premium obligations are calculated at regulated plan/market/contract levels, not simply from the companywide HBR. Centene’s 10-Ks did disclose MLR/return-premium payables in both years, including ACA “minimum medical loss ratio” payables of **$294 million** at Dec. 31, 2025 and **$238 million** at Dec. 31, 2020. Therefore, if the question is asking whether the filings show any plan-level MLR/return-premium liabilities, the answer is **yes, in both years**; but if the question is asking whether the consolidated MLR itself was low enough to imply a rebate, the answer is **no**, and **2020 was closer**.

{
  "sources": [
    {
      "url": "https://www.sec.gov/Archives/edgar/data/1071739/000107173926000049/cnc-20251231.htm",
      "name": "Centene Corporation FY2025 Form 10-K"
    },
    {
      "url": "https://www.sec.gov/Archives/edgar/data/1071739/000107173921000039/cnc-20201231.htm",
      "name": "Centene Corporation FY2020 Form 10-K"
    },
    {
      "url": "https://www.cms.gov/marketplace/private-health-insurance/medical-loss-ratio",
      "name": "CMS — Medical Loss Ratio"
    }
  ]
}

Score: 100. All checks passed.

The question above is a General Quantitative Analysis task: deciding whether Centene owed a rebate to policyholders in either fiscal year based on the medical loss ratio threshold, and reporting the underlying MLRs.
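The arithmetic in the response can be rechecked with a few lines. The figures (in $ millions) are copied from the model's answer, and the 85% reference threshold follows the response's own choice; the function and variable names are illustrative.

```python
def health_benefits_ratio(medical_costs: float, premium_revenue: float) -> float:
    """Medical costs as a percentage of premium revenue."""
    return 100.0 * medical_costs / premium_revenue


# Inputs as quoted in the response, in $ millions.
hbr_2025 = health_benefits_ratio(157_702, 171_556)
hbr_2020 = health_benefits_ratio(86_264, 100_055)

# The response measures distance from the stricter 85% benchmark,
# using the ratios rounded to one decimal place as reported.
THRESHOLD = 85.0
margin_2025 = round(hbr_2025, 1) - THRESHOLD
margin_2020 = round(hbr_2020, 1) - THRESHOLD

# The year with the smaller margin was closer to the rebate threshold.
closer_year = 2020 if margin_2020 < margin_2025 else 2025

print(f"FY2025 HBR {hbr_2025:.2f}%, {margin_2025:.2f} pts above threshold")
print(f"FY2020 HBR {hbr_2020:.2f}%, {margin_2020:.2f} pts above threshold")
print(f"Closer to threshold: FY{closer_year}")
```

As the response itself notes, this consolidated ratio is only a proxy: actual rebate obligations are determined at the plan, market, or contract level.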

Trajectory Comparison

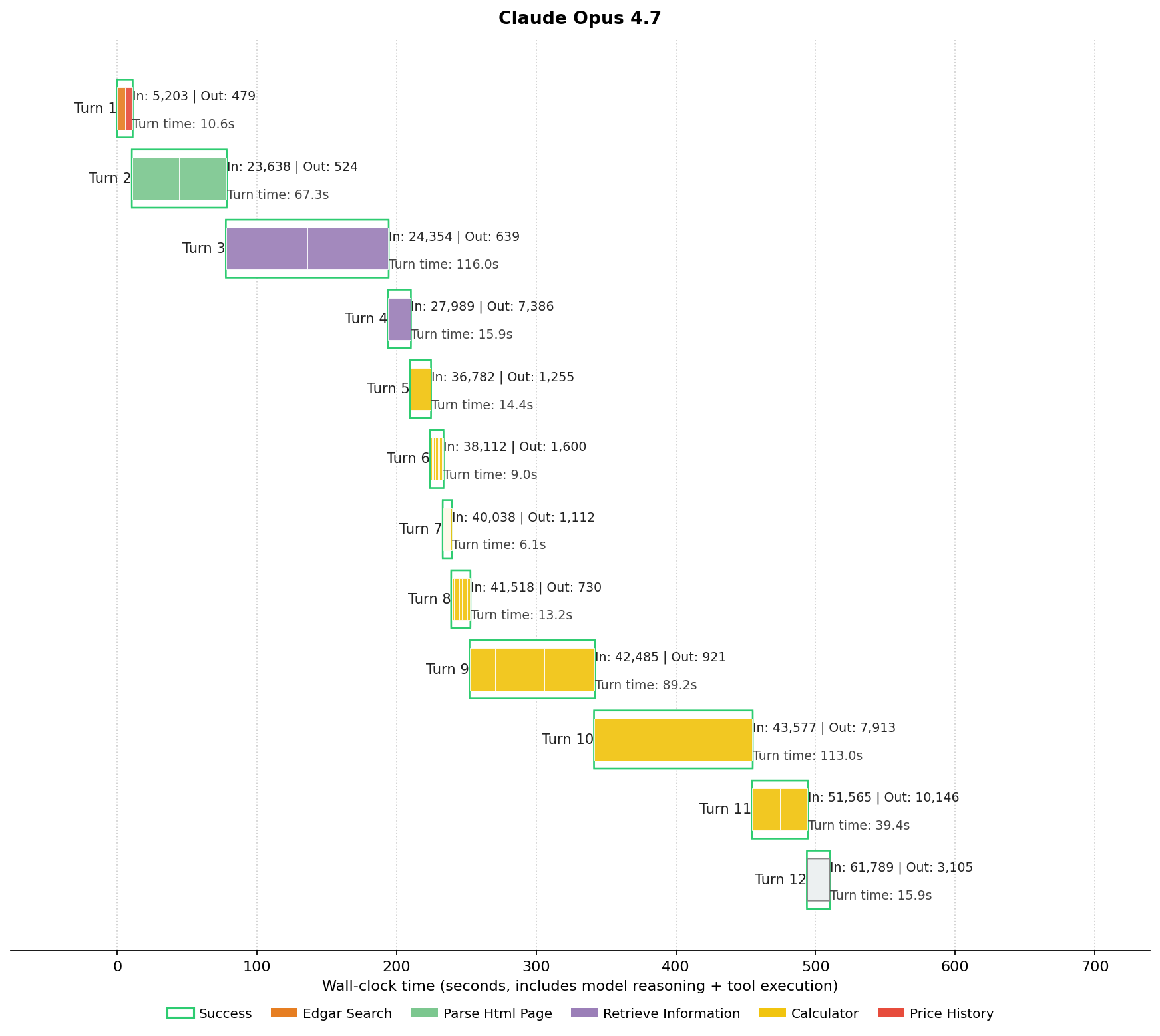

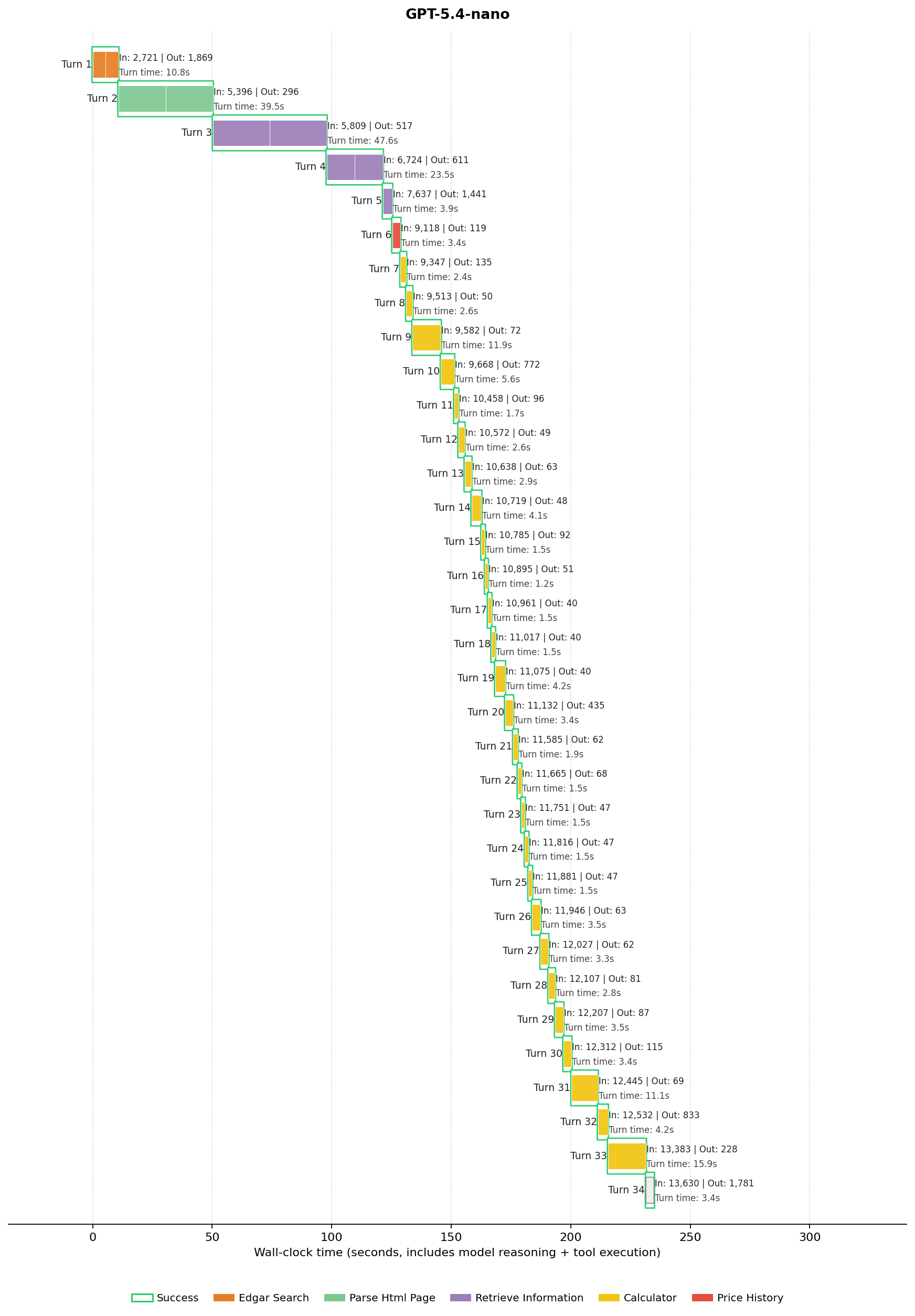

The visualizations below show how a frontier model and a smaller, cheaper model approached the same Financial Modeling question — building a standalone DCF model with mid-year convention from two annual reports plus market data. Each row is one agent turn; bar width is wall-clock time per turn (model reasoning + tool execution).

Claude Opus 4.7 trajectory (passed most checks):

The frontier model worked through the question in 12 turns: pull market data, fetch and parse the two filings, run retrieve_information to extract the inputs needed for the DCF, then step through a few tight bursts of parallel calculator calls to compute projections, terminal value, and discounted cash flows before submitting.

GPT 5.4 Nano trajectory (zeroed on most checks):

The smaller model needed 34 turns to reach an answer of similar shape, nearly 3× as many. After the retrieval phase, it hammered the calculator one operation at a time for the next 27 turns rather than fanning out parallel calls, and the resulting numbers landed close to the rubric’s targets but not precisely enough to score.

Methodology

Question Design

Questions target the analytical depth expected of a second- or third-year investment banking analyst. Each question must satisfy four criteria:

- Determinism. A single, unambiguous correct answer with no room for competing interpretations.

- Multi-source synthesis. Answers require chaining information across multiple filings or data sources rather than a single lookup.

- Domain specificity. Questions require implicit industry knowledge — normalizations, reclassifications, and accounting adjustments that follow sector convention rather than explicit instruction.

- Forensic precision. Decisive information is frequently buried in footnotes, MD&A caveats, or accounting policy disclosures. Surface-level summarization is insufficient.

Dataset

The dataset is divided into three parts: Public (27 open-source samples), Private Validation (450 samples available for license), and Test (450 samples).

- The Public set and agent harness are fully open and can be accessed here.

- The Private Validation set is available for license. Interested parties are encouraged to contact us directly for access.

- The Test set will remain private permanently. All results reported on this page are based solely on the Test set to prevent potential overfitting.

The dataset splits were sampled to preserve the distribution of question categories and difficulty.
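One common way to get splits that preserve category and difficulty mix is stratified sampling. The sketch below is an assumption about how such a split could be done, not a description of the procedure actually used; the item fields, fractions, and function name are hypothetical, and per-stratum rounding means the resulting sizes are only approximately proportional.

```python
import random
from collections import defaultdict


def stratified_split(items, key, fractions, seed=0):
    """Split items into len(fractions) parts, stratum by stratum."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for item in items:
        strata[key(item)].append(item)

    splits = [[] for _ in fractions]
    for _, group in sorted(strata.items(), key=lambda kv: kv[0]):
        rng.shuffle(group)
        start, cumulative = 0, 0.0
        for i, fraction in enumerate(fractions):
            cumulative += fraction
            # The last split takes whatever remains in the stratum.
            is_last = i == len(fractions) - 1
            end = len(group) if is_last else round(cumulative * len(group))
            splits[i].extend(group[start:end])
            start = end
    return splits


# Hypothetical pool: 2 categories x 2 difficulty levels x 10 questions each.
pool = [
    {"category": c, "difficulty": d, "id": i}
    for c in ("Comparables", "Precedents")
    for d in ("easy", "hard")
    for i in range(10)
]
parts = stratified_split(
    pool, key=lambda q: (q["category"], q["difficulty"]), fractions=(0.2, 0.4, 0.4)
)
```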

Question Taxonomy

Finance Agent v2 organizes questions into nine analytical categories reflecting real equity-research workflows.

General Qualitative Analysis

Summarization and comparison of fundamental filing sections: business model, risk factors, MD&A, and standard disclosures across companies.

Compare Walmart, Costco, and Target’s capital allocation priorities across capex, dividends, share repurchases, and debt management.

General Quantitative Analysis

Extraction and calculation of reported financials such as revenue growth, CAGR, leverage ratios, and executive compensation — often requiring verification against restated historicals.

Compare Home Depot and Lowe’s FY2024 inventory efficiency and calculate the difference in days inventory outstanding.

Market Analysis

Relative trading performance, total shareholder return, and how news cycles or guidance shifts drive stock volatility relative to sector indices.

Measure Sun Communities’ stock reaction after the announced sale of Safe Harbor Marinas, then relate the move to the company’s stated use of proceeds.

Comparables

Building trading comps tables, calculating EV multiples, and normalizing enterprise value across peers by adjusting for off-balance-sheet items buried in footnotes.

Rank major U.S. banks by excess CET1 ratio relative to their regulatory minimums.

Precedents

Analyzing M&A transaction multiples from S-4 filings and target financials, with industry-specific EBITDA normalization (e.g. exploration expense add-backs in Oil & Gas).

Extract enterprise values and EV/EBITDA multiples for recent industrial distribution acquisitions and rank the transactions by pre-synergy multiple.

Adjustments

Bridging GAAP to non-GAAP or pro forma figures by reconciling SBC, acquired intangible amortization, and other non-cash items across the P&L and cash flow statement.

Reconcile Honeywell’s GAAP operating income to segment profit across annual releases and identify newly introduced adjustment categories.

Earnings Analysis

Comparing reported results against consensus estimates and prior guidance, including non-GAAP reconciliations across consecutive quarterly reporting cycles.

Compare Rapid7’s Q3 2025 actuals against prior revenue, non-GAAP operating income, and ARR guidance.

Disclosure Analysis

Tracking shifts in MD&A language, KPI definitions, and segment reporting methodology across multiple annual filings, then restating prior periods to reflect the new format.

Track Boeing’s segment reporting and 787 cost-recovery disclosures across FY2022-FY2024 10-K filings.

Financial Modeling

Multi-step frameworks including DCF/NPV, LBO, and M&A accretion/dilution models built from historical ratios extracted from primary filings.

Assess whether Ralph Lauren could justify a distressed acquisition of Capri under stated synergy, margin, and valuation assumptions.
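As an illustration of the chained arithmetic this category involves, here is a sketch of a DCF using the mid-year convention mentioned in the trajectory comparison. It assumes annual cash flows discounted at t − 0.5 years and a perpetuity-growth terminal value discounted over the same mid-year period as the final cash flow; conventions for the terminal value vary in practice, and the function name and inputs are hypothetical.

```python
def dcf_mid_year(
    cash_flows: list[float], discount_rate: float, terminal_growth: float
) -> float:
    """Present value of explicit cash flows plus a terminal value.

    Assumes cash flows arrive evenly through each year, so year t is
    discounted over (t - 0.5) years instead of t years.
    """
    if not cash_flows:
        raise ValueError("need at least one projected cash flow")
    if discount_rate <= terminal_growth:
        raise ValueError("discount rate must exceed terminal growth")

    n = len(cash_flows)
    pv_explicit = sum(
        cf / (1 + discount_rate) ** (t - 0.5)
        for t, cf in enumerate(cash_flows, start=1)
    )
    # Perpetuity-growth terminal value based on the final projected cash flow.
    terminal_value = (
        cash_flows[-1] * (1 + terminal_growth) / (discount_rate - terminal_growth)
    )
    # Assumption: terminal value is discounted over n - 0.5 years.
    pv_terminal = terminal_value / (1 + discount_rate) ** (n - 0.5)
    return pv_explicit + pv_terminal


# Hypothetical inputs: five years of cash flows, 9% discount rate, 2% growth.
value = dcf_mid_year([100.0, 110.0, 120.0, 130.0, 140.0], 0.09, 0.02)
```

Each intermediate figure feeds the next, which is why small rounding or sourcing errors early in the chain can push the final value outside a strict numeric tolerance.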